EU AI Act

After almost three years of negotiation, the European Parliament has finally passed the AI Act. Like the GDPR, the Digital Services Act, and the Digital Markets Act, it has an explicit extraterritorial scope: companies outside the EU that serve the European market must comply with it and face the same penalties as EU-based companies. This time, fines are capped at 7% of global annual turnover, up from 4% under the GDPR. Meta was fined 1.2 billion euros by the Irish Data Protection Commission in May 2023. With the higher cap, it may only be a matter of time before more big fish are caught by the AI Act's new provisions.
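To make the jump from 4% to 7% concrete, here is a minimal sketch of the top-tier fine caps. It assumes the headline rules: under the GDPR, up to €20 million or 4% of worldwide annual turnover, whichever is higher; under the AI Act, for prohibited practices, up to €35 million or 7%, whichever is higher. The function names are hypothetical. The sketch ignores lower penalty tiers and special rules for smaller companies. It also computes only the legal maximum; regulators set actual fines case by case.

```python
def max_fine_eur(annual_turnover_eur: int, fixed_cap_eur: int, turnover_percent: int) -> int:
    """Upper bound of a fine: the higher of a fixed amount or a percentage of
    worldwide annual turnover. Integer arithmetic avoids float rounding."""
    return max(fixed_cap_eur, annual_turnover_eur * turnover_percent // 100)


def gdpr_max_fine(annual_turnover_eur: int) -> int:
    # GDPR top tier: EUR 20 million or 4% of worldwide annual turnover, whichever is higher
    return max_fine_eur(annual_turnover_eur, 20_000_000, 4)


def ai_act_max_fine(annual_turnover_eur: int) -> int:
    # AI Act top tier (prohibited practices): EUR 35 million or 7%, whichever is higher
    return max_fine_eur(annual_turnover_eur, 35_000_000, 7)


if __name__ == "__main__":
    # Hypothetical company with EUR 100 billion in worldwide annual turnover
    turnover = 100_000_000_000
    print(f"GDPR cap:   EUR {gdpr_max_fine(turnover):,}")
    print(f"AI Act cap: EUR {ai_act_max_fine(turnover):,}")
```

For a hypothetical company with €100 billion in turnover, the cap rises from €4 billion to €7 billion. For a company with €100 million in turnover, the fixed amounts dominate, giving caps of €20 million and €35 million.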

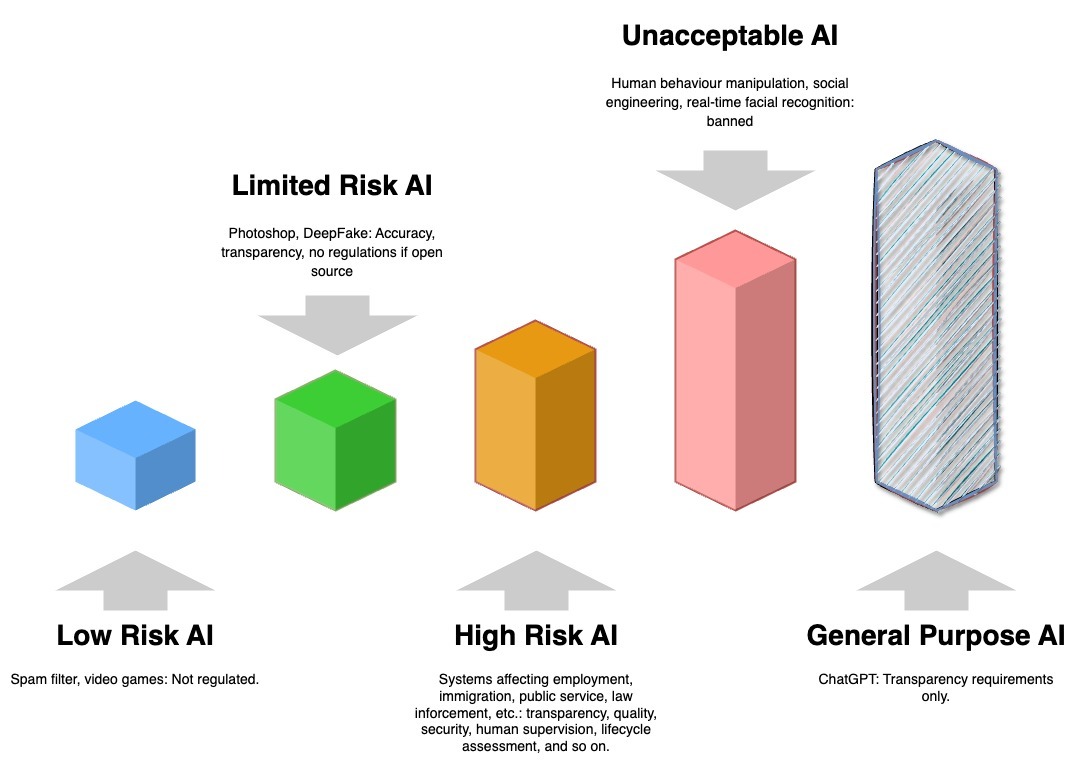

But what is in the AI Act? It takes a risk-based approach to AI systems, and its drafts had to be substantially revised after OpenAI released ChatGPT. Instead of the four categories originally envisioned, it now has five. The new one, called “general-purpose AI” (GPAI), covers large language models (Bard, GPT, LLaMA, etc.). It is further split off into a special high-impact category depending on how much computing power was used to train the model.

The act now divides AI technologies and models into five groups, each with different obligations. For general-purpose AI, the transparency requirements are spelled out explicitly:

- generated content must be disclosed as AI-generated;
- the model must not produce illegal content;
- the copyrighted data used for training must be summarized.

The table below summarizes the risk categories and their obligations.

| Category | Examples | What the AI Act Says |
|---|---|---|
| General-Purpose AI | ChatGPT and other large language or image models that can be used to build general-purpose chatbots. | Transparency requirements must be met. High-impact models (cumulative training compute above 10^25 floating-point operations) must also undergo evaluation. |
| Low or Minimal Risk AI | Video games, email spam filters. | Not regulated. All national laws on them have been repealed. Providers may adopt non-binding codes of conduct. |
| Limited Risk AI | Image editing software, PDF generators, spreadsheet software, emotion recognition, and limited-use chatbots, but also software that can create fake images or videos (deepfakes). | Accuracy and transparency must be maintained. No regulation applies if the software is free and open-source. |
| High-Risk AI | AI systems that may affect employment, education, training, border control, law enforcement, public services, legal systems, or critical infrastructure, as well as products covered by EU product safety legislation, such as cars, airplanes, and medical equipment. | Pre-release and lifecycle assessments must be run. Transparency, quality, security, and human oversight requirements must be met. People have the right to file complaints. |
| Unacceptable-Risk AI | Manipulation of human behavior, social scoring, real-time facial recognition in public spaces. | Banned. |

Table: Risk-Based Categorization of AI
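The high-impact GPAI line in the table is set by training compute, not by electricity use. The Act presumes high-impact capabilities when cumulative training compute exceeds 10^25 floating-point operations (FLOPs), and the Commission can adjust that threshold. The sketch below checks hypothetical models against it. Training compute is estimated with the widely used ~6 × parameters × tokens rule of thumb for dense transformers. That heuristic, the function names, and the example model sizes are assumptions for illustration, not anything the Act specifies.

```python
# Presumption threshold for high-impact GPAI: cumulative training compute
# greater than 10^25 floating-point operations.
HIGH_IMPACT_THRESHOLD_FLOPS = 1e25


def estimated_training_flops(n_params: float, n_tokens: float) -> float:
    """Rough training-compute estimate for a dense transformer using the
    common ~6 * parameters * tokens heuristic (an assumption, not part of the Act)."""
    return 6 * n_params * n_tokens


def presumed_high_impact(n_params: float, n_tokens: float,
                         threshold: float = HIGH_IMPACT_THRESHOLD_FLOPS) -> bool:
    # The presumption applies when estimated compute exceeds the threshold
    return estimated_training_flops(n_params, n_tokens) > threshold


if __name__ == "__main__":
    # Hypothetical models: (parameters, training tokens)
    examples = {
        "small model": (7e9, 2e12),             # ~8.4e22 FLOPs
        "frontier-scale model": (1e12, 1e13),   # ~6e25 FLOPs
    }
    for name, (params, tokens) in examples.items():
        flops = estimated_training_flops(params, tokens)
        print(f"{name}: {flops:.1e} FLOPs, presumed high-impact: {presumed_high_impact(params, tokens)}")
```

A real assessment would use the provider's actual compute records rather than this estimate. The heuristic only shows how far typical model sizes sit from the threshold.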

Two points stand out. The first is the introduction of regulatory sandboxes at the national level (in each member state). These are controlled environments where AI systems can be developed and tested under strict state oversight before being released to the market or used in public services. The second is the establishment of a single European Artificial Intelligence Board for EU-wide oversight. The act also sets different timelines for different parts. The ban on unacceptable AI systems takes effect six months after the act enters into force. Most high-risk obligations apply after 24 months, or 36 months for high-risk AI in products covered by EU product safety legislation.

Notably, the act does not come with a fixed list of AI systems in each risk category, so the lists can be expanded or narrowed as the European Commission and the supervisory authorities deem necessary. It also classifies deepfakes as only limited risk. By contrast, the US state of Tennessee passed its ELVIS Act (Ensuring Likeness Voice and Image Security Act) just three days ago to protect musicians' voices and likenesses from unauthorized AI imitation.

Will the AI Act stand the test of time? Or will it need further revisions even before all of its provisions are phased in?